- cross-posted to:

- technews@radiation.party

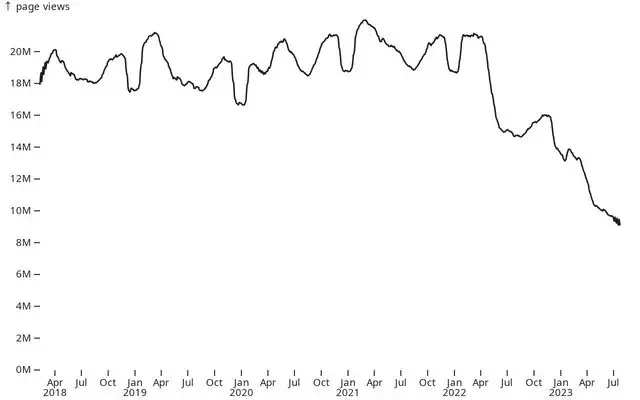

Over the past one and a half years, Stack Overflow has lost around 50% of its traffic. This decline is similarly reflected in site usage, with approximately a 50% decrease in the number of questions and answers, as well as the number of votes these posts receive.

The charts below show the usage represented by a moving average of 49 days.
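For readers who want to reproduce that kind of smoothing, here is a minimal sketch of a 49-day moving average. The post does not say whether the window is trailing or centered, so a trailing window is assumed here, and the function name and sample numbers are made up for illustration.

```python
from collections import deque


def moving_average(values, window=49):
    """Trailing moving average over `values`.

    Assumption: a trailing window (the post does not specify).
    Positions before the window is full are returned as None.
    """
    buf = deque(maxlen=window)
    total = 0.0
    out = []
    for v in values:
        if len(buf) == window:
            # The oldest value is about to be evicted by append().
            total -= buf[0]
        buf.append(v)
        total += v
        out.append(total / window if len(buf) == window else None)
    return out


if __name__ == "__main__":
    # Hypothetical daily counts, not real Stack Overflow data.
    daily_counts = [100, 120, 90, 80, 110]
    print(moving_average(daily_counts, window=3))
```

A window of 49 days is seven full weeks, so each point averages the same number of weekdays and weekends, which presumably is why it smooths out the weekly cycle.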

What happened?

@magic_lobster_party I can’t believe someone wrote that. Incorrect answers do more harm than good. If the person asking doesn’t know the answer, how should he or she know it’s incorrect and treat it only as a hint?

I don’t know about others’ experiences, but I’ve been completely stuck on problems that I only figured out how to solve with ChatGPT. It’s very forgiving when I don’t know the name of something I’m trying to do or don’t know how to phrase it well, so even if the actual answer is wrong, it gives me somewhere to start and clues me in to the terminology to use.

In the context of coding it can be valuable. I created two tables in a database and asked it to write a query, and it did 90% of the job. It was using an incorrect column for a join. If you are using it for coding, you should notice very quickly what is wrong, at least if you have experience.
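To make that kind of mistake concrete, here is a small sketch using Python's built-in `sqlite3`. The tables, columns, and data are hypothetical and not the ones from the comment above; the point is only that a join on the wrong column tends to show up as soon as you look at the result.

```python
import sqlite3

# Hypothetical schema and data, for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL);
    INSERT INTO customers VALUES (1, 'Ada'), (2, 'Linus');
    INSERT INTO orders VALUES (10, 1, 5.0), (11, 2, 7.5), (12, 2, 1.0);
""")

# Plausible-looking but wrong: joins on the order's own id
# instead of the foreign key customer_id.
wrong = conn.execute(
    "SELECT c.name, o.total FROM customers c "
    "JOIN orders o ON o.id = c.id ORDER BY o.id"
).fetchall()

# Corrected join on the foreign key.
right = conn.execute(
    "SELECT c.name, o.total FROM customers c "
    "JOIN orders o ON o.customer_id = c.id ORDER BY o.id"
).fetchall()

order_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]

if __name__ == "__main__":
    print("wrong join rows:", len(wrong), "of", order_count, "orders")
    print("right join rows:", right)
```

Comparing the row count of the join against the number of orders is a cheap sanity check here: the wrong join returns no rows at all, while the corrected one returns one row per order.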

Google the provided solution for additional sources. Often when I search for solutions to problems I don’t get the right answer directly. Often the provided solution may not even work for me.

But I might find other clues of the problem which can aid me in further research. In the end I finally have all the clues I need to find the answer to my question.

How do you Google anything when all the results are AI-generated crap made to generate ad revenue?

Well then I guess I have to survive with ChatGPT if the internet is so riddled with search engine optimized garbage. We’re thankfully not there yet, at least not with computer tech questions.

In my experience, with both coding and natural sciences, a slightly incorrect answer is very useful: you attempt to apply it, realize during initial testing/analysis that it’s wrong in some way, then tweak it until it’s correct. That’s especially true compared to not receiving any answer or being ridiculed by internet randos.

Well, if they’re referring to coding solutions, they’re right: sometimes non-working code can lead to a working solution, if you know what you’re doing ofc.

Even if you don’t know what you’re doing, ChatGPT can still do well if you tell it what went wrong with the suggestion it gave you. Given that further context, it can debug its code or realize that it made wrong assumptions about what you were asking.